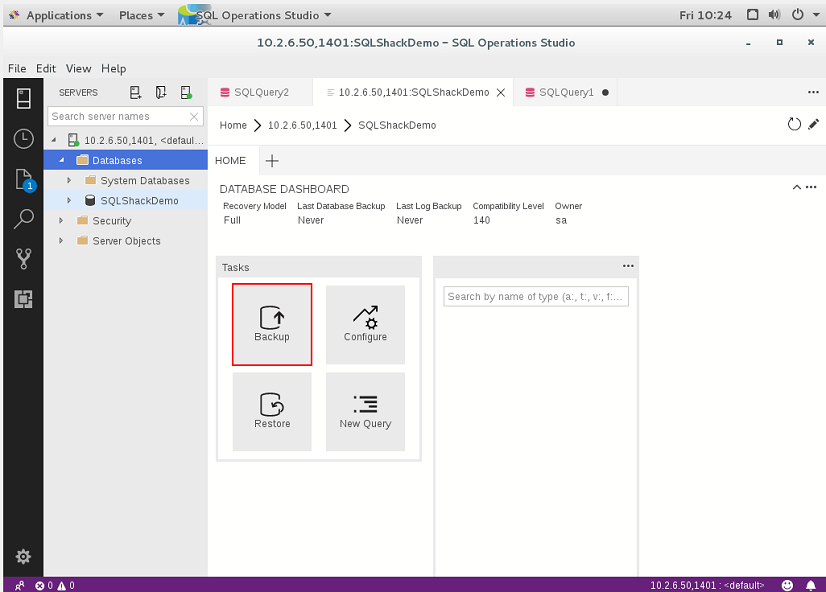

Having SQL Server running as a container is not just useful for a demo where you might not have access to an instance of SQL Server. As noted, it is also great for development and testing environments, because you can easily run integration tests starting from a clean SQL Server image and known data by seeding new sample data. See also: Run the SQL Server Docker image on Linux, Mac, or Windows; Connect and query SQL Server on Linux with sqlcmd.

Seeding with test data on Web application startup

To add data to the database when the application starts up, you can add seeding code to the Main method (`public static int Main(string[] args)`) in the Program class of the Web API project, the same method that sets up logging with `Log.Logger = CreateSerilogLogger(configuration)`.

When you run integration tests, having a way to generate data consistent with your integration tests is useful. Being able to create everything from scratch, including an instance of SQL Server running on a container, is great for test environments.

EF Core InMemory database versus SQL Server running as a container

Another good choice when running tests is to use the Entity Framework InMemory database provider. You can specify that configuration in the ConfigureServices method (`public void ConfigureServices(IServiceCollection services)`) of the Startup class in your Web API project by registering the DbContext with the InMemory database provider, `services.AddDbContext(opt => opt.UseInMemoryDatabase())`. The alternative is to register the DbContext with the SQL Server provider.

There is an important catch, though. The in-memory database does not support many constraints that are specific to a particular database. For instance, you might add a unique index on a column in your EF Core model and write a test against your in-memory database to check that it does not let you add a duplicate value. With the in-memory database that test cannot work, because unique indexes on a column are not enforced.
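The unique-index limitation is easy to miss, so here is a minimal sketch of it. It assumes the Microsoft.EntityFrameworkCore.InMemory package and a recent EF Core version, where `UseInMemoryDatabase` takes a database name. The `CatalogBrand` entity, `DemoContext`, and the sample value are hypothetical names made up for illustration, not types from eShopOnContainers.

```csharp
using System;
using System.Linq;
using Microsoft.EntityFrameworkCore;

// Hypothetical entity, used only for this illustration.
public class CatalogBrand
{
    public int Id { get; set; }
    public string Name { get; set; } = "";
}

// Hypothetical context that declares a unique index on Name.
public class DemoContext : DbContext
{
    public DemoContext(DbContextOptions<DemoContext> options) : base(options) { }

    public DbSet<CatalogBrand> Brands => Set<CatalogBrand>();

    protected override void OnModelCreating(ModelBuilder modelBuilder)
    {
        // A relational provider such as SQL Server turns this into a
        // unique constraint; the InMemory provider does not enforce it.
        modelBuilder.Entity<CatalogBrand>().HasIndex(b => b.Name).IsUnique();
    }
}

public static class InMemoryUniqueIndexDemo
{
    // Saves two rows with the same Name and returns how many were stored.
    public static int CountDuplicates()
    {
        var options = new DbContextOptionsBuilder<DemoContext>()
            .UseInMemoryDatabase(Guid.NewGuid().ToString()) // fresh store per call
            .Options;

        using var context = new DemoContext(options);
        context.Brands.Add(new CatalogBrand { Name = "Contoso" });
        context.Brands.Add(new CatalogBrand { Name = "Contoso" });
        context.SaveChanges(); // not expected to throw with the InMemory provider

        return context.Brands.Count(b => b.Name == "Contoso");
    }
}
```

Against the InMemory provider both rows should be saved, so a test that expects the second insert to fail would not pass. The same model against a SQL Server container should reject the duplicate, which is the argument for running constraint-sensitive tests against the containerized database.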
The SQL Server container in the sample application is configured with YAML code in the docker-compose.yml file, under the `sqldata:` service entry, which is executed when you run docker-compose up. Note that the YAML code has consolidated configuration information from the generic docker-compose.yml file and a second compose file. (Usually you would separate the environment settings from the base or static information related to the SQL Server image.)

In a similar way, instead of using docker-compose, the following docker run command can run that container: `docker run -e 'ACCEPT_EULA=Y' -e -p 5433:1433 -d /mssql/server:2017-latest`

However, if you are deploying a multi-container application like eShopOnContainers, it is more convenient to use the docker-compose up command so that it deploys all the required containers for the application.

When you start this SQL Server container for the first time, the container initializes SQL Server with the password that you provide. Once SQL Server is running as a container, you can update the database by connecting through any regular SQL connection, such as from SQL Server Management Studio, Visual Studio, or C# code.

The eShopOnContainers application initializes each microservice database with sample data by seeding it with data on startup, as explained in the section on seeding with test data.
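To make the connection and seeding steps concrete, here is a hedged sketch of what connecting from C# and seeding on startup might look like. It is not the eShopOnContainers code. It assumes the Microsoft.EntityFrameworkCore.SqlServer package, the 5433:1433 port mapping from the docker run command above, and the `sa` login. The `CatalogType` entity, `SeedDemoContext`, the `SeedDemoDb` database name, and the sample rows are all hypothetical.

```csharp
using System.Linq;
using Microsoft.EntityFrameworkCore;

// Hypothetical entity; not the eShopOnContainers type.
public class CatalogType
{
    public int Id { get; set; }
    public string Name { get; set; } = "";
}

// Hypothetical context used only for this sketch.
public class SeedDemoContext : DbContext
{
    public SeedDemoContext(DbContextOptions<SeedDemoContext> options) : base(options) { }

    public DbSet<CatalogType> CatalogTypes => Set<CatalogType>();
}

public static class SeedDemo
{
    // Builds a context that points at the SQL Server container.
    // "localhost,5433" assumes the 5433:1433 mapping; the password is
    // whatever was supplied when the container was first started.
    public static SeedDemoContext CreateForContainer(string password)
    {
        var connectionString =
            $"Server=localhost,5433;Database=SeedDemoDb;User Id=sa;" +
            $"Password={password};TrustServerCertificate=True";

        var options = new DbContextOptionsBuilder<SeedDemoContext>()
            .UseSqlServer(connectionString)
            .Options;

        return new SeedDemoContext(options);
    }

    // Creates the database if needed and inserts sample rows only when the
    // table is empty, so calling it on every startup is harmless.
    public static int Seed(SeedDemoContext context)
    {
        context.Database.EnsureCreated();

        if (!context.CatalogTypes.Any())
        {
            context.CatalogTypes.AddRange(
                new CatalogType { Name = "Mug" },      // hypothetical sample data
                new CatalogType { Name = "T-Shirt" }); // hypothetical sample data
            context.SaveChanges();
        }

        return context.CatalogTypes.Count();
    }
}
```

Calling `SeedDemo.Seed(SeedDemo.CreateForContainer(password))` from `Main` before the host starts would give every run the same known data. The empty-table check is a design choice for this sketch: it keeps seeding idempotent, so restarting the application against an existing container does not duplicate rows.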

SQL Server running as a container with a microservice-related database

In eShopOnContainers, there's a container named sqldata, as defined in the docker-compose.yml file, that runs a SQL Server for Linux instance with the SQL databases for all microservices that need one.

A key point in microservices is that each microservice owns its related data, so it should have its own database. In this case, they are all in the same container to keep Docker memory requirements as low as possible. Keep in mind that this is a good-enough solution for development and, perhaps, testing but not for production.

You can have your databases (SQL Server, PostgreSQL, MySQL, etc.) on regular standalone servers, in on-premises clusters, or in PaaS services in the cloud like Azure SQL DB. However, for development and test environments, having your databases running as containers is convenient, because you don't have any external dependency and simply running the docker-compose up command starts the whole application. Having those databases as containers is also great for integration tests, because the database is started in the container and is always populated with the same sample data, so tests can be more predictable.
